Explain ResNet50 ImageNet classification using Partition explainer

This notebook demonstrates how to use SHAP to explain image classification models. In this example we explain the output of the ResNet50 model for classifying images into 1000 ImageNet classes.

[1]:

import json

import numpy as np

from tensorflow.keras.applications.resnet50 import ResNet50, preprocess_input

import shap

Loading Model and Data

[2]:

# load pre-trained model and data

model = ResNet50(weights="imagenet")

X, y = shap.datasets.imagenet50()

[3]:

# getting ImageNet 1000 class names

url = "https://s3.amazonaws.com/deep-learning-models/image-models/imagenet_class_index.json"

with open(shap.datasets.cache(url)) as file:

    class_names = [v[1] for v in json.load(file).values()]

# print("Number of ImageNet classes:", len(class_names))

# print("Class names:", class_names)
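The class index file maps string indices to [WordNet ID, label] pairs, which is why the cell above takes v[1]. The sketch below repeats that extraction on a two-entry stand-in for the real file (the sample string is illustrative, not the actual download). It also shows a variant that sorts by integer key, which may be useful if the key order of the JSON cannot be relied on.

```python
import json

# Illustrative two-entry stand-in for imagenet_class_index.json;
# the real file has 1000 entries in the same {"index": [wnid, label]} shape.
sample = '{"1": ["n01443537", "goldfish"], "0": ["n01440764", "tench"]}'
index = json.loads(sample)

# Same extraction as in the notebook: the result follows the file's key order.
names_in_file_order = [v[1] for v in index.values()]

# Order-independent variant: sort by the integer class index first.
names_by_index = [index[k][1] for k in sorted(index, key=int)]

print(names_in_file_order)
print(names_by_index)
```

With the deliberately shuffled sample, only the second list is guaranteed to line up with the model's output positions.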

SHAP ResNet50 model explanation for images

Build a partition explainer with:

- the model (a Python function)
- the masker (a Python function)
- output names (a list of names of the output classes)

A quick run with a few evaluations

[8]:

# python function to get model output; replace this function with your own model function.

def f(x):

    tmp = x.copy()

    # use the returned array so this works whether or not preprocessing happens in place
    tmp = preprocess_input(tmp)

    return model(tmp)

# define a masker that is used to mask out partitions of the input image.

masker = shap.maskers.Image("inpaint_telea", X[0].shape)

# create an explainer with model and image masker

explainer = shap.Explainer(f, masker, output_names=class_names)

# here we explain two images using 100 evaluations of the underlying model to estimate the SHAP values

shap_values = explainer(

    X[1:3], max_evals=100, batch_size=50, outputs=shap.Explanation.argsort.flip[:4]

)
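The model function f above copies its input before preprocessing. The sketch below illustrates why that can matter: if a preprocessing step modifies its argument in place, the caller's array is changed unless a copy is made first. The function scale_in_place is a hypothetical stand-in and is not the Keras preprocess_input.

```python
import numpy as np


def scale_in_place(batch):
    # Hypothetical in-place preprocessing step (not Keras preprocess_input).
    batch /= 255.0
    return batch


def f_with_copy(x):
    # Copy first so the caller's array is left untouched.
    tmp = x.copy()
    return scale_in_place(tmp)


def f_without_copy(x):
    # No copy: the in-place step modifies the caller's array.
    return scale_in_place(x)


original = np.full((1, 2, 2, 3), 255.0)
safe_out = f_with_copy(original)
max_after_copy_version = original.max()   # input unchanged

unsafe_out = f_without_copy(original)
max_after_inplace_version = original.max()  # input has been rescaled

print(max_after_copy_version, max_after_inplace_version)
```

Since an explainer evaluates the model many times on masked variants of the same images, keeping the inputs unmodified is the safer default.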

[ ]:

shap_values.shape

[11]:

np.tile(np.array(shap_values.output_names), 2)

[11]:

array(['American_egret', 'crane', 'little_blue_heron', 'flamingo',

'American_egret', 'crane', 'little_blue_heron', 'flamingo'],

dtype='<U17')

[7]:

np.array(shap_values.output_names).shape[0]

[7]:

4

[11]:

shap_values.output_dims

[11]:

(4,)

Explainer options:

The image masker above uses an inpainting technique called “inpaint_telea”. Alternative masking options are available to experiment with, such as “inpaint_ns” and “blur(kernel_xsize, kernel_ysize)”.
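To make the idea of masking concrete, here is a minimal NumPy sketch that hides one region of a toy image by replacing it with a fill value. It is only an illustration under simplifying assumptions: the helper mask_region is hypothetical, and a constant fill stands in for the inpainting or blurring that the SHAP image masker applies.

```python
import numpy as np


def mask_region(image, hidden, fill_value=0.0):
    """Return a copy of image with pixels where hidden is True set to fill_value."""
    out = image.copy()
    out[hidden] = fill_value  # a 2-D boolean mask applies to all channels
    return out


# Toy 4x4 RGB image with distinct values per pixel.
image = np.arange(4 * 4 * 3, dtype=float).reshape(4, 4, 3)

# Hide the top-left 2x2 block; everything else stays visible.
hidden = np.zeros((4, 4), dtype=bool)
hidden[:2, :2] = True

masked = mask_region(image, hidden, fill_value=image.mean())
print(masked[:, :, 0])
```

The explainer compares model outputs with regions hidden and shown, so the choice of fill (inpainting, blur, or a constant) affects what "absent" means for a region.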

The recommended number of evaluations is 300-500 to get explanations with sufficient granularity for the superpixels. More evaluations give finer granularity but also increase run time.

Note:

outputs=shap.Explanation.argsort.flip[:4] has been used in the code above when computing SHAP values because we want the top 4 most probable classes for each image, i.e. the 4 classes with the highest probability, in decreasing order. Hence, a flipped argsort sliced to 4 entries is used.
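The same selection can be sketched in plain NumPy. The probabilities below are made up for illustration and cover only five classes. The sketch shows what "argsort, flip, slice to 4" selects per image and is not the SHAP implementation itself.

```python
import numpy as np

# Made-up class probabilities for two images over five classes.
probs = np.array([
    [0.05, 0.60, 0.10, 0.21, 0.04],
    [0.30, 0.05, 0.40, 0.06, 0.19],
])

top_k = 4

# argsort is ascending, so reverse each row to get descending order,
# then keep the first top_k class indices per image.
top_indices = np.argsort(probs, axis=1)[:, ::-1][:, :top_k]

# Probabilities of the selected classes, in decreasing order per image.
top_probs = np.take_along_axis(probs, top_indices, axis=1)

print(top_indices)
print(top_probs)
```

Each row of top_indices lists the class positions from most to least probable, which is the order in which the explained outputs appear for that image.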

Visualizing SHAP values output

[6]:

# output with shap values

shap.image_plot(shap_values)

[24]:

shap_values[0].base_values.shape

[24]:

(1000,)

[31]:

shap_values[0].base_values

[31]:

array([3.98265023e-04, 4.48955398e-05, 5.20897702e-05, 2.04479627e-04,

5.67642674e-05, 1.37821451e-04, 1.43101468e-04, 2.34555133e-04,

1.65241177e-03, 8.71808079e-05, 2.73833750e-04, 1.03291946e-04,

1.03254220e-04, 2.66912321e-05, 9.38569065e-05, 4.04711056e-04,

3.30887298e-04, 8.17410037e-05, 4.58840441e-05, 7.76972214e-04,

9.79037606e-04, 1.82875578e-04, 6.26673936e-05, 4.15584684e-04,

1.75147157e-04, 2.43703653e-05, 1.90513470e-04, 4.63949953e-04,

3.72401183e-03, 2.16879227e-04, 4.97241551e-03, 2.01521367e-01,

4.61910939e-04, 3.70553578e-04, 1.67468010e-04, 3.31375678e-03,

1.03218982e-03, 1.10009874e-04, 4.62198048e-04, 2.72900041e-04,

1.63967852e-04, 1.55510512e-04, 7.61000774e-05, 8.27621043e-05,

1.24086940e-03, 5.44384784e-05, 1.88978534e-04, 1.65600795e-03,

4.68490616e-04, 2.02517796e-04, 2.24879230e-04, 1.53284360e-04,

7.28016312e-04, 2.18322930e-05, 9.70595356e-05, 2.40055524e-04,

8.75858896e-05, 8.05352684e-05, 5.24058286e-03, 4.47012542e-04,

1.37802403e-04, 5.53620885e-05, 1.67022704e-03, 2.84969807e-04,

8.81938729e-04, 1.68564642e-04, 1.31366367e-04, 9.56201809e-04,

2.78153806e-04, 7.04826234e-05, 1.15577168e-04, 5.03735617e-04,

3.23968381e-03, 2.96114665e-03, 2.07320263e-04, 1.06132834e-03,

2.17621040e-04, 7.06988329e-04, 1.62530720e-04, 2.02930160e-03,

8.79141953e-05, 1.91258034e-03, 1.58560916e-03, 1.76169444e-03,

6.13501586e-04, 1.01693309e-04, 1.22117897e-04, 2.70858320e-04,

3.35622499e-05, 1.05499045e-03, 4.14801470e-05, 1.82900709e-04,

5.84131012e-05, 1.04882440e-03, 2.19639414e-03, 1.43847588e-04,

8.00978305e-05, 2.61449284e-04, 5.75504149e-04, 1.17039803e-04,

3.48173926e-04, 9.26456341e-05, 2.51709687e-04, 4.78086266e-04,

2.59803119e-03, 1.59291131e-03, 1.66199796e-04, 1.56387687e-04,

1.11853424e-03, 1.68977969e-03, 2.42620936e-05, 1.18718512e-04,

3.65030882e-03, 5.25807380e-04, 2.50643864e-03, 4.63505945e-04,

3.17113445e-05, 2.52411381e-04, 8.77027574e-04, 5.45950279e-05,

8.71916054e-05, 4.65254387e-04, 9.42074548e-05, 3.14210588e-03,

6.98393560e-05, 1.40927164e-04, 2.92200624e-04, 1.27284331e-02,

1.96964378e-04, 3.43363092e-04, 6.04275789e-04, 2.46494092e-05,

4.61999938e-04, 2.57244479e-04, 4.37212363e-03, 1.17149972e-03,

4.89713420e-05, 3.27316113e-04, 4.47746861e-04, 2.02462077e-04,

8.61583801e-04, 1.34167858e-04, 3.71429429e-04, 6.87520718e-04,

1.40331360e-03, 1.22133445e-03, 1.66886639e-05, 1.42857461e-04,

1.14866011e-02, 3.36653134e-03, 3.29846021e-04, 4.72038257e-04,

1.00409007e-03, 1.29732513e-03, 3.61699349e-04, 5.30289579e-03,

5.18341956e-04, 4.70881874e-04, 6.44493266e-05, 1.05675921e-04,

2.06455775e-03, 2.58339016e-04, 4.76116576e-04, 1.20431083e-04,

1.24215968e-02, 2.86966406e-05, 7.48898237e-05, 1.71770240e-04,

1.53026136e-04, 2.45231204e-04, 1.14865397e-04, 3.22352367e-04,

3.37602425e-04, 6.14294258e-04, 9.70410256e-05, 1.59063807e-03,

5.08750509e-03, 2.67939991e-04, 1.43872213e-03, 1.19161239e-04,

2.46804469e-04, 2.98232539e-04, 4.77343885e-04, 4.77507783e-05,

4.32613585e-03, 1.01641439e-04, 6.29403366e-05, 3.28598602e-04,

4.87449579e-03, 1.87227430e-04, 5.53444610e-04, 5.19249064e-04,

1.10896956e-03, 3.43542802e-03, 1.74651941e-04, 2.22513816e-04,

2.95794592e-03, 2.09766687e-04, 4.75166926e-05, 2.88550509e-04,

6.87570893e-04, 2.06802375e-04, 1.71519205e-04, 2.70951336e-04,

9.43710693e-05, 1.12773397e-03, 9.82338935e-03, 3.32266139e-03,

2.19096444e-04, 2.17689230e-05, 1.61327654e-04, 1.06600019e-04,

1.96517725e-03, 5.96507452e-04, 7.03871483e-04, 1.59394997e-03,

2.64894590e-03, 1.60959898e-04, 6.20000577e-03, 6.26118344e-05,

1.64568322e-04, 9.34288691e-05, 1.06487481e-04, 2.47626798e-04,

7.09660351e-03, 1.91016507e-03, 7.00892007e-04, 2.34331994e-04,

2.19726609e-03, 1.90845807e-04, 4.88849764e-04, 5.34049235e-03,

1.82612066e-03, 1.67027392e-04, 1.59819072e-04, 5.94002078e-04,

1.34168877e-04, 9.71634930e-04, 2.19330061e-04, 2.32918101e-04,

2.32211058e-03, 8.56369548e-03, 1.04254432e-04, 1.94690569e-04,

1.75361261e-02, 3.74004652e-04, 2.08059035e-04, 1.98756400e-02,

3.35199817e-04, 7.62398820e-04, 4.94323089e-04, 9.44103158e-05,

7.72377680e-05, 2.18191394e-03, 9.12886608e-05, 1.49337298e-04,

5.48757566e-03, 2.28227451e-04, 2.91966484e-04, 3.75757751e-04,

3.29240400e-04, 4.76886606e-04, 1.14875911e-04, 2.97170162e-04,

5.42078109e-04, 1.10409739e-04, 9.61581129e-04, 2.99977983e-05,

7.14230409e-04, 2.44811999e-05, 1.56077331e-05, 8.35507381e-05,

1.11137240e-04, 2.19001100e-04, 1.94354588e-03, 3.41678562e-04,

2.43427046e-03, 3.74987503e-05, 6.12538948e-04, 3.07665410e-04,

9.51292086e-03, 1.96635447e-04, 2.98951869e-03, 1.51283562e-03,

1.11732305e-04, 3.72940012e-05, 1.21334312e-03, 4.69207989e-05,

1.40990349e-04, 3.28055816e-04, 6.17932164e-05, 9.55878990e-04,

7.26847284e-05, 2.80747423e-04, 7.74153834e-03, 2.19893031e-04,

1.46946550e-04, 8.18398767e-05, 9.75400544e-05, 3.98906486e-05,

9.15985182e-03, 6.38652884e-04, 8.55517923e-04, 5.58320142e-04,

2.84550682e-04, 6.79018209e-04, 1.22444949e-03, 1.38428388e-02,

9.44111234e-05, 9.13376061e-05, 1.90601801e-04, 2.07817706e-04,

9.78715834e-04, 5.39315515e-04, 9.03732842e-04, 2.02737399e-04,

2.44897928e-05, 1.55207381e-04, 7.26549304e-04, 2.10206723e-04,

7.31480657e-04, 3.42042244e-04, 2.21371403e-04, 4.17837378e-04,

6.34400232e-04, 7.87110184e-05, 1.63991455e-04, 1.89242372e-03,

2.18088622e-03, 2.73842219e-04, 1.27485720e-04, 6.10379575e-05,

6.02986023e-04, 1.50342930e-05, 3.14068719e-04, 1.06195104e-03,

2.15661494e-04, 8.36609805e-04, 5.64410839e-05, 6.65351326e-05,

2.11620267e-04, 1.86994192e-04, 1.15989812e-03, 7.73980646e-05,

7.99473783e-05, 6.08985603e-04, 1.25390972e-04, 5.11682360e-03,

5.26337171e-05, 2.94670346e-04, 1.78296075e-04, 1.97027315e-04,

2.16452434e-04, 3.26410867e-04, 1.02113234e-04, 1.52413466e-03,

3.93337468e-05, 6.38024008e-04, 2.47433345e-04, 3.88692890e-04,

3.44268419e-03, 2.77661893e-05, 1.42515026e-04, 1.01953614e-04,

5.43837762e-03, 8.35901155e-05, 1.35738961e-03, 4.04788414e-04,

1.58322830e-04, 1.16253315e-04, 1.26562698e-03, 1.86057645e-04,

5.57620137e-04, 1.01343765e-04, 2.88358642e-05, 4.74227796e-04,

3.16448684e-04, 4.19742246e-05, 1.08359178e-04, 1.04418854e-04,

2.03641539e-04, 4.04073711e-04, 7.33445268e-05, 3.12642660e-03,

1.77940441e-04, 1.04366243e-03, 2.02374431e-04, 5.83104083e-05,

2.26815441e-03, 7.83597527e-04, 8.78596678e-04, 2.20475224e-04,

3.20527288e-05, 8.91639676e-04, 2.62770463e-05, 2.32063496e-04,

1.86159959e-04, 2.52828421e-03, 1.35955503e-04, 3.13804485e-05,

4.37561609e-03, 6.02974789e-04, 5.17095978e-05, 1.81379146e-04,

1.14109635e-03, 8.31345329e-04, 2.78152613e-04, 6.97735872e-04,

1.05109788e-03, 8.61559995e-04, 8.07119440e-03, 3.48262605e-04,

2.55202758e-05, 5.52419981e-04, 6.68813955e-05, 3.29635768e-05,

7.73706532e-04, 3.64235049e-04, 1.51698780e-03, 2.81690009e-04,

4.75254655e-03, 6.69377157e-04, 9.54573101e-04, 1.08042186e-04,

5.68635260e-05, 4.24618163e-04, 2.58263346e-04, 1.45405869e-03,

1.02591919e-04, 1.55952512e-04, 1.85346958e-04, 7.03747282e-05,

2.41437432e-04, 2.35192638e-04, 1.24183003e-04, 1.20975601e-04,

7.09347078e-05, 6.99766242e-05, 7.71764317e-05, 1.89607745e-04,

7.93690444e-04, 1.37692579e-04, 7.09580781e-05, 5.91546879e-04,

2.90565134e-04, 2.89046846e-04, 5.19869197e-03, 4.70197119e-04,

1.15571762e-04, 9.94998263e-04, 2.57296371e-04, 4.94676409e-03,

5.00711962e-04, 2.99575167e-05, 1.86757010e-04, 6.52849994e-05,

6.04235218e-04, 4.68017897e-05, 3.87711974e-04, 4.72599531e-05,

6.97334204e-03, 2.53278966e-04, 2.60601024e-04, 8.47871488e-05,

3.23877830e-05, 1.19668525e-03, 5.75865619e-03, 4.11593704e-04,

1.53028246e-04, 1.79754803e-04, 6.80635159e-04, 1.39376134e-04,

6.17261903e-05, 2.87276634e-04, 8.66111950e-05, 1.57140807e-04,

9.42889383e-05, 2.58421525e-04, 5.66013405e-05, 2.35475280e-04,

1.04127292e-04, 6.85896521e-05, 7.02898469e-05, 1.98832509e-04,

1.16414631e-04, 3.62440856e-04, 4.04755992e-04, 1.61688542e-04,

8.00234993e-05, 5.06251745e-05, 1.01898695e-04, 1.85255765e-03,

2.10804399e-03, 3.95909999e-04, 1.04698156e-04, 1.23862468e-04,

2.01316288e-04, 2.67114752e-04, 1.09853136e-04, 1.04562007e-02,

1.44658901e-04, 1.38847245e-04, 1.86406018e-04, 2.91111821e-04,

2.58459095e-05, 1.76549394e-04, 5.91578195e-04, 1.80155170e-04,

1.16277879e-04, 6.64511754e-05, 1.91455300e-04, 9.07513968e-05,

1.45928192e-04, 1.78181485e-03, 1.08011079e-03, 1.49311381e-04,

9.82756392e-05, 2.88707030e-04, 2.67076190e-04, 9.56205360e-04,

9.14820994e-05, 1.44630976e-04, 2.71266763e-04, 2.20545771e-04,

3.51296447e-04, 5.65011360e-05, 5.39898028e-05, 3.32881791e-05,

4.18267970e-04, 8.53816455e-04, 1.39340307e-04, 2.61821784e-04,

1.01256774e-04, 6.20011197e-05, 8.18510260e-03, 5.84557885e-04,

3.23629247e-05, 1.49878833e-04, 1.95337387e-04, 1.71919542e-04,

2.35342843e-04, 2.49581831e-03, 1.60848489e-04, 1.09985948e-03,

4.44245707e-05, 1.16778909e-04, 1.92636347e-04, 3.99644450e-05,

6.28446651e-05, 3.98166245e-03, 1.55952876e-04, 2.17046312e-04,

1.09251858e-04, 2.49226135e-03, 1.54479500e-03, 1.25159859e-04,

9.80652621e-05, 7.80950242e-04, 1.25729610e-04, 2.39668138e-04,

3.19829152e-04, 2.43557079e-04, 6.90597994e-03, 1.81299256e-04,

7.97690518e-05, 3.59173209e-05, 7.77634486e-05, 5.12411294e-04,

6.92587346e-04, 9.71015688e-05, 5.37150423e-04, 8.37979460e-05,

1.03847982e-04, 1.64556390e-04, 1.62095050e-04, 7.50828767e-05,

6.85978739e-04, 1.59797564e-04, 1.71349748e-04, 7.46525475e-05,

5.57506050e-04, 7.15658753e-05, 5.18313493e-04, 1.54518042e-04,

7.34301575e-04, 7.41808981e-05, 9.81214471e-05, 1.08507685e-02,

2.20979142e-04, 7.11828982e-03, 1.17054806e-05, 8.21940892e-04,

2.02488227e-05, 6.03395638e-05, 2.55094492e-04, 3.03323497e-04,

5.15690008e-05, 1.75295805e-04, 4.68559418e-04, 1.89823142e-04,

5.47616291e-05, 1.30891727e-04, 2.46041076e-04, 4.26332932e-04,

3.16890655e-04, 4.96258763e-05, 8.59340711e-04, 2.02530529e-04,

4.18328382e-05, 5.38278109e-05, 7.10692402e-05, 1.22153884e-04,

4.86796489e-04, 1.24314654e-04, 7.03770143e-04, 1.04051956e-03,

2.68163021e-05, 6.27119932e-03, 2.30516074e-03, 1.38077943e-04,

4.67849924e-04, 1.64890280e-05, 1.47823477e-04, 9.48119268e-05,

7.49706873e-04, 2.70575456e-05, 2.05066081e-05, 1.48544926e-03,

7.35011272e-05, 1.63713237e-03, 1.29237270e-03, 6.70079084e-04,

3.67125933e-04, 3.29222501e-04, 9.32080293e-05, 1.03922932e-04,

4.79535338e-05, 7.76475863e-05, 1.59768169e-04, 2.87516066e-03,

2.80911103e-03, 7.61366333e-04, 6.05647219e-04, 1.54264562e-04,

2.49425339e-05, 1.76321148e-04, 1.35979441e-03, 2.27449345e-04,

1.73271954e-04, 1.28269999e-03, 7.98516776e-05, 8.44012073e-04,

1.77738373e-04, 2.57999836e-05, 1.64547542e-04, 5.57913503e-04,

1.26685749e-03, 3.86202475e-04, 8.87230679e-04, 7.48189050e-04,

2.13030304e-04, 3.55858996e-04, 8.33153354e-06, 2.95320933e-04,

3.16009100e-05, 2.53893842e-04, 1.18534744e-03, 1.37425945e-04,

8.79636675e-04, 5.70305798e-04, 1.32686793e-04, 2.63076770e-04,

2.84897833e-04, 4.98322945e-04, 1.60985219e-05, 1.27237683e-04,

6.39092177e-04, 2.34655710e-03, 2.54381361e-04, 2.92099867e-05,

8.70297808e-05, 7.05914063e-05, 1.59767864e-04, 8.34528691e-05,

6.28060734e-05, 1.51177555e-05, 1.69857871e-04, 3.63609492e-04,

4.09535496e-05, 6.42370665e-04, 1.83896496e-04, 3.21555126e-05,

9.33920406e-03, 1.51379456e-04, 8.91392294e-04, 7.34808709e-05,

7.63745839e-03, 9.67634551e-05, 3.08589311e-03, 1.76709506e-03,

8.15805033e-05, 2.84980168e-04, 1.37901196e-04, 2.92042911e-04,

5.23895316e-04, 1.24685976e-04, 5.17050910e-04, 2.32969309e-04,

3.13187120e-05, 4.23460988e-05, 3.14876524e-04, 3.53547439e-05,

1.10661949e-03, 1.50967354e-03, 2.42085895e-04, 1.70345920e-05,

1.50748165e-04, 1.34549962e-04, 5.18803572e-05, 9.82871279e-04,

1.07740611e-03, 5.10502650e-05, 9.50180674e-06, 4.26059996e-05,

2.65619456e-04, 7.56132140e-05, 7.09234373e-05, 3.56290082e-04,

2.77788815e-04, 2.11004211e-04, 3.53443623e-03, 4.85317978e-05,

1.16430230e-04, 7.01543409e-04, 1.44020942e-05, 5.91689895e-04,

6.82438840e-05, 6.52912931e-05, 2.92932855e-05, 1.66858081e-04,

5.85521979e-04, 4.82931762e-04, 3.54350952e-04, 8.34171474e-03,

1.62974946e-04, 2.67933850e-04, 1.30400702e-04, 3.10731193e-05,

2.82113149e-04, 6.14081291e-05, 2.89048231e-03, 3.98092088e-04,

1.53062156e-05, 1.85977016e-03, 4.84216580e-04, 1.83015407e-04,

1.53889210e-04, 1.32637697e-05, 4.98024783e-05, 9.33829069e-05,

1.08729248e-04, 1.96910370e-03, 8.92132593e-05, 7.47793456e-05,

3.03913461e-04, 5.80130472e-05, 1.10250810e-04, 3.30982637e-03,

5.04739233e-04, 9.20615275e-05, 7.00237288e-04, 8.91018353e-05,

1.07366790e-03, 2.60756264e-04, 2.11966879e-04, 8.20988615e-04,

2.19961730e-04, 2.33830389e-04, 3.15418845e-04, 4.79362061e-05,

2.69673445e-04, 5.04744712e-05, 5.64143920e-05, 5.33246894e-05,

6.90017478e-05, 1.36698000e-04, 2.83982721e-04, 8.47348856e-05,

5.74015139e-05, 1.15665382e-04, 4.05571511e-04, 4.68011480e-04,

4.67822865e-05, 3.00760876e-04, 1.67407416e-04, 3.02288918e-05,

1.11583337e-04, 1.59645104e-04, 1.73546228e-04, 6.30670751e-04,

1.02046807e-03, 2.51042400e-03, 1.16524985e-04, 7.50074469e-05,

8.18201734e-05, 1.62838216e-04, 2.80639011e-04, 2.22368413e-04,

1.39261465e-04, 7.51803600e-05, 3.63202620e-04, 4.17022275e-05,

3.47620167e-04, 5.77659339e-05, 4.18579002e-05, 2.42096503e-05,

1.12250843e-03, 2.84655980e-04, 1.09178177e-03, 2.29647441e-04,

8.82061548e-04, 2.46612704e-04, 2.03358708e-04, 1.37021038e-04,

2.18374873e-04, 2.04092226e-04, 2.34132949e-05, 3.30167168e-05,

9.79309698e-05, 5.24312258e-04, 5.23222424e-03, 4.28866944e-04,

4.27278283e-04, 4.20961696e-05, 7.91574595e-04, 1.49085918e-05,

6.50748750e-03, 1.34461065e-04, 2.10423168e-04, 2.58454558e-04,

6.88945584e-05, 2.52605474e-04, 1.82618242e-05, 7.12760302e-05,

2.55153718e-04, 2.36239048e-05, 1.01488891e-04, 1.98719892e-04,

3.77983743e-05, 7.20363343e-04, 9.62147082e-04, 1.28874002e-04,

9.41858088e-05, 3.23168957e-03, 1.23727470e-04, 1.92188854e-05,

3.54324962e-04, 3.18146122e-05, 7.00732926e-04, 6.85932173e-05,

2.01224640e-04, 6.98182499e-03, 4.59520757e-04, 9.72801761e-04,

5.03679446e-04, 4.76087164e-03, 3.34953744e-04, 1.01442929e-04,

9.72617563e-05, 1.41746947e-04, 7.29511899e-04, 3.49429640e-04,

2.15930704e-05, 6.75361225e-05, 3.15851881e-04, 6.48199712e-05,

3.88102460e-04, 1.08534540e-03, 3.40335624e-04, 7.11025714e-05,

2.24293879e-04, 1.50417589e-04, 2.75202565e-05, 5.46244482e-05,

4.36935152e-05, 2.22663773e-04, 2.66235329e-05, 5.41452435e-04,

3.01329768e-03, 6.68641151e-05, 2.13318184e-04, 4.83280281e-04,

3.25042219e-03, 2.68050027e-03, 2.30311023e-04, 8.69394498e-05,

2.22041956e-04, 3.05501686e-04, 2.05413511e-04, 1.23969074e-02,

1.59675707e-04, 8.81001033e-05, 1.13639137e-04, 5.50219047e-05,

7.88093021e-05, 2.22792616e-04, 4.43840865e-04, 1.43152502e-04,

1.66197169e-05, 3.35655146e-04, 5.74437363e-05, 1.59689171e-05,

6.05816713e-05, 3.97622352e-04, 3.04213725e-03, 1.58328272e-04,

1.24343760e-05, 9.89821274e-04, 2.06074947e-05, 7.68100668e-04,

3.63771833e-04, 1.09289220e-04, 9.56191216e-05, 2.01528255e-05,

2.48080294e-04, 1.70826330e-04, 3.17801314e-05, 8.31752113e-05,

1.45166923e-04, 3.32538475e-05, 4.35978654e-05, 2.31716942e-04,

9.36048309e-05, 1.46375887e-05, 5.24211209e-05, 4.74207263e-05,

5.80011001e-05, 1.25948922e-04, 6.51396252e-03, 8.12801300e-04,

5.31415171e-05, 1.57267496e-03, 6.40222663e-03, 7.39321840e-05,

4.70565539e-03, 1.65742051e-04, 1.82069867e-04, 8.12231665e-05,

2.21989758e-05, 6.43523454e-05, 1.68223705e-04, 2.12641185e-04,

4.13967442e-04, 6.86593557e-05, 3.99616620e-05, 3.24723987e-05,

5.34793035e-05, 1.45994454e-05, 8.81493106e-05, 1.56545924e-04,

2.27955333e-03, 4.21397563e-04, 4.95772947e-05, 2.61580717e-04,

1.35582286e-05, 3.30929557e-04, 2.16315282e-04, 6.19240600e-05,

2.29309764e-04, 1.89436760e-05, 9.95220253e-05, 1.30594799e-05,

2.31584621e-04, 2.58677243e-03, 3.26315960e-04, 1.45622762e-04,

1.95064305e-04, 7.66519734e-05, 1.00914447e-03, 1.00372148e-04,

6.48575078e-05, 1.86936624e-04, 1.26099811e-04, 5.64772126e-05,

3.31675867e-04, 2.09970924e-04, 1.60944095e-04, 5.30265497e-05,

1.47023078e-04, 7.85566168e-04, 7.93441359e-05, 1.78703503e-03,

4.36751638e-04, 1.18882686e-04, 1.46988168e-04, 6.06477435e-04,

6.12383519e-05, 8.20263958e-05, 4.62990356e-05, 4.65407902e-05])

[26]:

shap_values.output_names

[26]:

['American_egret',

'crane',

'little_blue_heron',

'flamingo',

'peacock',

'goose',

'white_stork',

'spoonbill',

'black_stork',

'pelican',

'albatross',

'limpkin',

'bustard',

'drake',

'bittern',

'king_penguin',

'black_swan',

'eel',

'jay',

'bee_eater',

'weasel',

'lakeside',

'magpie',

'red-breasted_merganser',

'vulture',

'crayfish',

'ostrich',

'water_ouzel',

'hook',

'Italian_greyhound',

'vine_snake',

'nematode',

'paper_towel',

'redshank',

'gazelle',

'balance_beam',

'dragonfly',

'dingo',

'chambered_nautilus',

'coil',

'macaw',

'dowitcher',

'brain_coral',

'ice_bear',

'toilet_tissue',

'mushroom',

'European_gallinule',

'damselfly',

'bubble',

'sea_snake',

'hognose_snake',

'oystercatcher',

'fountain_pen',

'coral_reef',

'green_snake',

'Siamese_cat',

'sundial',

'ruddy_turnstone',

'paintbrush',

'umbrella',

'American_chameleon',

'coral_fungus',

'rubber_eraser',

'gar',

'ant',

'American_coot',

'fox_squirrel',

'snail',

'Ibizan_hound',

'earthstar',

'jacamar',

'hummingbird',

'quill',

'chain',

'red-backed_sandpiper',

'pole',

'sandbar',

'goblet',

'fountain',

'knot',

'bighorn',

'Granny_Smith',

'chime',

'coffee_mug',

'bald_eagle',

'hen-of-the-woods',

'barrow',

'banana',

'anemone_fish',

'syringe',

'indigo_bunting',

'slug',

'starfish',

'nipple',

'remote_control',

'bathing_cap',

'ram',

'crossword_puzzle',

'pencil_sharpener',

'hornbill',

'African_chameleon',

'sea_urchin',

'goldfinch',

'thunder_snake',

'monitor',

'ping-pong_ball',

'bath_towel',

'sulphur-crested_cockatoo',

'ringneck_snake',

'horizontal_bar',

'chainlink_fence',

'Eskimo_dog',

'rule',

'cowboy_hat',

'tick',

'parallel_bars',

'African_elephant',

'whippet',

'meerkat',

'boa_constrictor',

'lorikeet',

'padlock',

'impala',

'thimble',

'bonnet',

'sea_slug',

'sidewinder',

'golf_ball',

'park_bench',

'bucket',

'horned_viper',

'quail',

'bannister',

'ballplayer',

'loudspeaker',

'Band_Aid',

'Labrador_retriever',

'Indian_elephant',

'binoculars',

'ptarmigan',

'kite',

'plastic_bag',

'proboscis_monkey',

'scorpion',

'iPod',

'lotion',

'bolete',

'ear',

'dishwasher',

'lampshade',

'birdhouse',

'flagpole',

'ski',

'sunscreen',

'polecat',

'letter_opener',

'washbasin',

'Egyptian_cat',

'bee',

'green_lizard',

'cup',

'handkerchief',

'French_horn',

'rapeseed',

'bassoon',

'grocery_store',

'canoe',

'bib',

'toucan',

'sea_lion',

'swimming_trunks',

'bell_pepper',

'sombrero',

'orange',

'parking_meter',

'lens_cap',

'screw',

'leatherback_turtle',

'dumbbell',

'barbell',

'tub',

'Weimaraner',

'black-footed_ferret',

'gibbon',

'pick',

'Saluki',

'mailbox',

'Petri_dish',

'whistle',

'macaque',

'paddle',

'gown',

'bearskin',

'ladle',

'toy_terrier',

'titi',

'web_site',

'orangutan',

'worm_fence',

'partridge',

'sock',

'dalmatian',

'feather_boa',

'Siberian_husky',

'siamang',

'velvet',

'digital_clock',

'hatchet',

'measuring_cup',

'stingray',

'stinkhorn',

'volleyball',

'bow',

'electric_fan',

'notebook',

'flute',

'television',

'butternut_squash',

'banded_gecko',

'sea_anemone',

'maillot',

'pretzel',

'alligator_lizard',

'agama',

'tennis_ball',

'trombone',

'pitcher',

'stage',

'crutch',

'soccer_ball',

'hard_disc',

'pedestal',

'analog_clock',

'golden_retriever',

'wing',

'honeycomb',

'shopping_cart',

'green_mamba',

'basenji',

'rugby_ball',

'platypus',

'can_opener',

"yellow_lady's_slipper",

'lab_coat',

'oboe',

'rock_python',

'Bedlington_terrier',

'matchstick',

'mink',

'crane',

'wooden_spoon',

'trimaran',

'mongoose',

'bolo_tie',

'robin',

'church',

'table_lamp',

'running_shoe',

'African_grey',

'seashore',

'hand-held_computer',

'mortarboard',

'mixing_bowl',

'conch',

'lemon',

'ruffed_grouse',

'tripod',

'ice_lolly',

'drumstick',

'butcher_shop',

'toyshop',

'chickadee',

'borzoi',

'boathouse',

'strainer',

'black_grouse',

'loupe',

'lynx',

'pinwheel',

'rocking_chair',

'stopwatch',

'Arctic_fox',

'cellular_telephone',

'neck_brace',

'bagel',

'stole',

'brambling',

'collie',

'walking_stick',

'hare',

'hammerhead',

'window_screen',

'suspension_bridge',

'schooner',

'hair_slide',

'doormat',

'badger',

'house_finch',

'hog',

'dock',

'hair_spray',

'racket',

'Indian_cobra',

'night_snake',

'corn',

'rain_barrel',

'sunglass',

'nail',

'beagle',

'vizsla',

'beaver',

'groenendael',

'bloodhound',

'electric_ray',

'piggy_bank',

'diaper',

'maillot',

'fiddler_crab',

'fly',

'otter',

'mouse',

'computer_keyboard',

'printer',

'Boston_bull',

'desktop_computer',

'typewriter_keyboard',

'Doberman',

'beaker',

'mousetrap',

'carton',

'swing',

'convertible',

'red_fox',

'breakwater',

'mask',

'beacon',

'water_snake',

'sunglasses',

'barbershop',

'dough',

'English_foxhound',

'coucal',

'screen',

'jeep',

'ringlet',

'reel',

'squirrel_monkey',

'water_bottle',

'American_Staffordshire_terrier',

'obelisk',

'grasshopper',

'wallaby',

'airliner',

'wig',

'Irish_setter',

'centipede',

'boxer',

'street_sign',

'cash_machine',

'jean',

'plunger',

'minivan',

'mosque',

'cabbage_butterfly',

'microphone',

'lion',

'eggnog',

'espresso',

'car_mirror',

'pineapple',

'toilet_seat',

'snorkel',

'common_newt',

'miniature_poodle',

'flat-coated_retriever',

'file',

'spaghetti_squash',

'standard_poodle',

'beach_wagon',

'junco',

'fur_coat',

'croquet_ball',

'tricycle',

'combination_lock',

'mobile_home',

'wall_clock',

'Great_Dane',

'zebra',

'face_powder',

'daisy',

'balloon',

'Cardigan',

'pillow',

'washer',

'spindle',

'schipperke',

'redbone',

'harmonica',

'miniature_pinscher',

'ice_cream',

'revolver',

'swab',

'echidna',

'seat_belt',

'power_drill',

'window_shade',

'kuvasz',

'bow_tie',

'rock_beauty',

'hammer',

'screwdriver',

'prayer_rug',

'admiral',

'water_jug',

'cardigan',

'hen',

'strawberry',

'warplane',

'perfume',

'buckeye',

'spotlight',

'gong',

'wine_bottle',

'guenon',

'armadillo',

'photocopier',

'pickelhaube',

'ski_mask',

'bikini',

'coyote',

'bulbul',

'jinrikisha',

'langur',

'chain_mail',

'loggerhead',

'shield',

'gorilla',

'hay',

'Greater_Swiss_Mountain_dog',

'Pomeranian',

'torch',

'puffer',

'potpie',

'flatworm',

'tobacco_shop',

'picket_fence',

'digital_watch',

'unicycle',

'sarong',

'dugong',

'desk',

'space_shuttle',

'hoopskirt',

'patio',

'dhole',

'tray',

'speedboat',

'Rottweiler',

'triceratops',

'Komodo_dragon',

'punching_bag',

'Chihuahua',

'medicine_chest',

'tabby',

'wild_boar',

'shoji',

'corkscrew',

'custard_apple',

'llama',

'hip',

'marmoset',

'sliding_door',

'red_wolf',

'cicada',

'mashed_potato',

'hermit_crab',

'pier',

'sweatshirt',

'tusker',

'Arabian_camel',

'white_wolf',

'plow',

'soup_bowl',

'kimono',

'malinois',

'maze',

'hamper',

'promontory',

'bassinet',

'cock',

'diamondback',

'knee_pad',

'baseball',

'oscilloscope',

'crash_helmet',

'trifle',

'frilled_lizard',

'space_bar',

'golfcart',

'lighter',

'crib',

'crate',

'jellyfish',

'cardoon',

'agaric',

'prairie_chicken',

'beer_bottle',

'lawn_mower',

'cucumber',

'Irish_terrier',

'tiger_beetle',

'plate',

'football_helmet',

'academic_gown',

'radio',

'wool',

'German_shepherd',

'quilt',

'leafhopper',

'violin',

"carpenter's_kit",

'ox',

'Norfolk_terrier',

'scuba_diver',

'dome',

'pickup',

'cliff',

'spiny_lobster',

'shovel',

'CD_player',

'West_Highland_white_terrier',

'military_uniform',

'fireboat',

'basset',

'lipstick',

'ashcan',

'briard',

'skunk',

'purse',

'Great_Pyrenees',

'axolotl',

'projectile',

'vacuum',

'ocarina',

'groom',

'shower_curtain',

'whiptail',

'lionfish',

'spider_web',

'binder',

'Border_collie',

'pot',

'palace',

'isopod',

'sax',

'sewing_machine',

'teddy',

'lycaenid',

'capuchin',

'brown_bear',

'indri',

'kelpie',

'ballpoint',

'shower_cap',

'cab',

'gas_pump',

'artichoke',

'book_jacket',

'plane',

'Madagascar_cat',

'chimpanzee',

'colobus',

'Staffordshire_bullterrier',

'Rhodesian_ridgeback',

'pirate',

'giant_panda',

'ladybug',

'great_grey_owl',

'cradle',

'malamute',

'clumber',

'head_cabbage',

'necklace',

'pay-phone',

'switch',

'broccoli',

'wok',

'spatula',

'backpack',

'soap_dispenser',

'shoe_shop',

'scoreboard',

'recreational_vehicle',

'cocker_spaniel',

'upright',

'totem_pole',

'sturgeon',

'papillon',

'barrel',

'terrapin',

'dishrag',

'cauliflower',

'Samoyed',

'geyser',

'beer_glass',

'grille',

'patas',

'bathtub',

'dung_beetle',

'Christmas_stocking',

'sorrel',

'grey_fox',

'mountain_tent',

'laptop',

'missile',

'parachute',

'restaurant',

'guillotine',

'cloak',

'planetarium',

'hartebeest',

'passenger_car',

'Airedale',

'kit_fox',

'studio_couch',

'Leonberg',

'jackfruit',

'cornet',

'Australian_terrier',

'rifle',

'rhinoceros_beetle',

'wolf_spider',

'barracouta',

'gondola',

'Gordon_setter',

'viaduct',

'mitten',

'Shih-Tzu',

'shopping_basket',

'panpipe',

'packet',

'Maltese_dog',

'grand_piano',

'Angora',

'hamster',

'ground_beetle',

'wallet',

'French_loaf',

'sandal',

'drum',

'carbonara',

'magnetic_compass',

'Gila_monster',

'greenhouse',

'catamaran',

'gasmask',

'cricket',

'odometer',

'iron',

'weevil',

'espresso_maker',

'mantis',

'cairn',

'stethoscope',

'castle',

'puck',

'fire_screen',

'monarch',

'scale',

'reflex_camera',

'gyromitra',

'spotted_salamander',

'muzzle',

'pop_bottle',

'oxcart',

'wood_rabbit',

'bell_cote',

'safety_pin',

'chiton',

'howler_monkey',

'sulphur_butterfly',

'miniskirt',

'tailed_frog',

'jaguar',

'miniature_schnauzer',

'bakery',

'bicycle-built-for-two',

'sloth_bear',

'porcupine',

'hand_blower',

'abacus',

'horse_cart',

'abaya',

'stupa',

'lacewing',

'pencil_box',

'oil_filter',

'Kerry_blue_terrier',

'pill_bottle',

'suit',

'leopard',

'solar_dish',

'lesser_panda',

'barber_chair',

'safe',

'pomegranate',

'Walker_hound',

'Dutch_oven',

'garter_snake',

'Pekinese',

'volcano',

'pajama',

'folding_chair',

'cello',

'tiger_cat',

'lumbermill',

'vault',

'chocolate_sauce',

'soft-coated_wheaten_terrier',

'chain_saw',

'cockroach',

'stone_wall',

'trolleybus',

'carousel',

'alp',

'moped',

'comic_book',

'tile_roof',

'acorn',

'water_tower',

'cocktail_shaker',

'mosquito_net',

'English_setter',

'prison',

'library',

'microwave',

'three-toed_sloth',

'maypole',

'coho',

'Afghan_hound',

'bottlecap',

'liner',

'eft',

'hourglass',

'motor_scooter',

'harp',

'Yorkshire_terrier',

'mountain_bike',

'consomme',

'cinema',

'joystick',

"jack-o'-lantern",

'bookshop',

'space_heater',

'accordion',

'disk_brake',

'toy_poodle',

'sea_cucumber',

'tarantula',

'Saint_Bernard',

'dial_telephone',

'buckle',

'basketball',

'fire_engine',

'teapot',

'paddlewheel',

'cougar',

'vase',

'Brabancon_griffon',

'menu',

'container_ship',

'bookcase',

'jersey',

'triumphal_arch',

'minibus',

'long-horned_beetle',

'marmot',

'Irish_water_spaniel',

'home_theater',

'Norwich_terrier',

'trailer_truck',

'guacamole',

'king_snake',

'Norwegian_elkhound',

'standard_schnauzer',

'Appenzeller',

'chiffonier',

'go-kart',

'Pembroke',

'bull_mastiff',

'bullet_train',

'acoustic_guitar',

'guinea_pig',

'Sussex_spaniel',

'wreck',

'bluetick',

'forklift',

'Blenheim_spaniel',

'killer_whale',

'coffeepot',

'pool_table',

'airship',

'vestment',

'Chesapeake_Bay_retriever',

'Scotch_terrier',

'Mexican_hairless',

'jigsaw_puzzle',

'American_alligator',

'tractor',

'rock_crab',

'Persian_cat',

'French_bulldog',

'black_widow',

'school_bus',

'African_crocodile',

'bobsled',

'radiator',

'chest',

'projector',

'African_hunting_dog',

'electric_guitar',

'grey_whale',

'Newfoundland',

'tiger_shark',

'steel_arch_bridge',

'cowboy_boot',

'zucchini',

'Welsh_springer_spaniel',

'barn',

'traffic_light',

'bulletproof_vest',

'turnstile',

"potter's_wheel",

'spider_monkey',

'goldfish',

'confectionery',

'whiskey_jug',

'Brittany_spaniel',

'burrito',

'ibex',

'trilobite',

'steel_drum',

'Border_terrier',

'hotdog',

'cheetah',

'mud_turtle',

'dining_table',

'cannon',

'electric_locomotive',

'plate_rack',

'toaster',

'Scottish_deerhound',

'megalith',

'milk_can',

'racer',

'Shetland_sheepdog',

'oxygen_mask',

'dogsled',

'cassette',

'entertainment_center',

'thresher',

'candle',

'saltshaker',

'tape_player',

'brassiere',

'envelope',

'fig',

'EntleBucher',

'harvestman',

'Old_English_sheepdog',

'Sealyham_terrier',

'barometer',

'thatch',

'streetcar',

'Crock_Pot',

'ambulance',

'submarine',

'red_wine',

'car_wheel',

'common_iguana',

'vending_machine',

'pug',

'acorn_squash',

'water_buffalo',

'baboon',

'European_fire_salamander',

'brass',

'cuirass',

'maraca',

'waffle_iron',

'black-and-tan_coonhound',

'Loafer',

'broom',

'slide_rule',

'hyena',

'chow',

'sleeping_bag',

'mailbag',

'banjo',

'cleaver',

'yawl',

'aircraft_carrier',

'tiger',

'timber_wolf',

'slot',

'Polaroid_camera',

'Japanese_spaniel',

'snow_leopard',

'box_turtle',

'Dandie_Dinmont',

'poncho',

'giant_schnauzer',

'English_springer',

'rotisserie',

'refrigerator',

'Irish_wolfhound',

'koala',

'modem',

'silky_terrier',

'stove',

'stretcher',

'Tibetan_terrier',

'cliff_dwelling',

'Dungeness_crab',

'leaf_beetle',

'American_lobster',

'steam_locomotive',

'bullfrog',

'cassette_player',

'Model_T',

'Tibetan_mastiff',

'wardrobe',

'sports_car',

'apiary',

'valley',

'half_track',

'great_white_shark',

'black_and_gold_garden_spider',

'theater_curtain',

'tree_frog',

'snowmobile',

'Windsor_tie',

'assault_rifle',

'wombat',

'frying_pan',

'Bernese_mountain_dog',

'Lhasa',

'freight_car',

'altar',

'harvester',

'garbage_truck',

'Bouvier_des_Flandres',

'throne',

'yurt',

'garden_spider',

'four-poster',

'tow_truck',

'pizza',

'meat_loaf',

'china_cabinet',

'scabbard',

'marimba',

'tank',

'clog',

'warthog',

'wire-haired_fox_terrier',

'tench',

'organ',

'curly-coated_retriever',

'king_crab',

'komondor',

'police_van',

'apron',

'barn_spider',

'radio_telescope',

'hippopotamus',

'Lakeland_terrier',

'American_black_bear',

'mortar',

'hot_pot',

'German_short-haired_pointer',

'monastery',

'otterhound',

'dam',

'overskirt',

'trench_coat',

'affenpinscher',

'bison',

'holster',

'breastplate',

'keeshond',

'caldron',

'limousine',

'moving_van',

'manhole_cover',

'drilling_platform',

'amphibian',

'snowplow',

'cheeseburger',

'lifeboat']

[20]:

# plot the SHAP values for both explained images; pass the full Explanation object,

# since indexing a single sample (shap_values[0]) is not supported by image_plot here

shap.image_plot(shap_values)

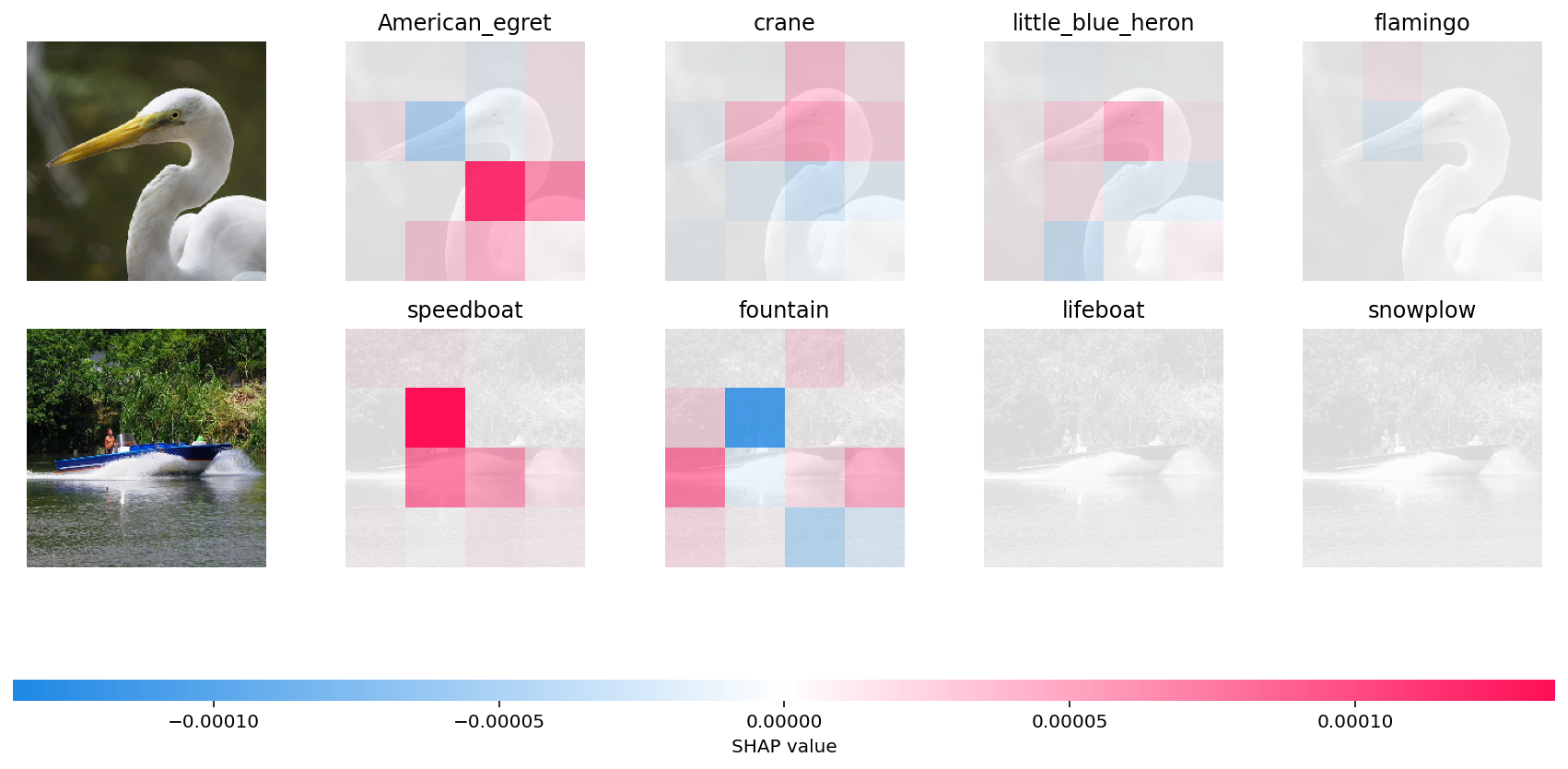

In the first example, the bird image is classified as an American egret, with the next most probable classes being crane, little blue heron, and flamingo. It is the “bump” over the bird’s neck that causes it to be classified as an American egret rather than a crane, heron, or flamingo. The neck region of the bird is highlighted in red superpixels.

In the second example, it is the shape of the boat that causes it to be classified as a speedboat rather than a fountain, lifeboat, or snowplow (again highlighted in red superpixels).

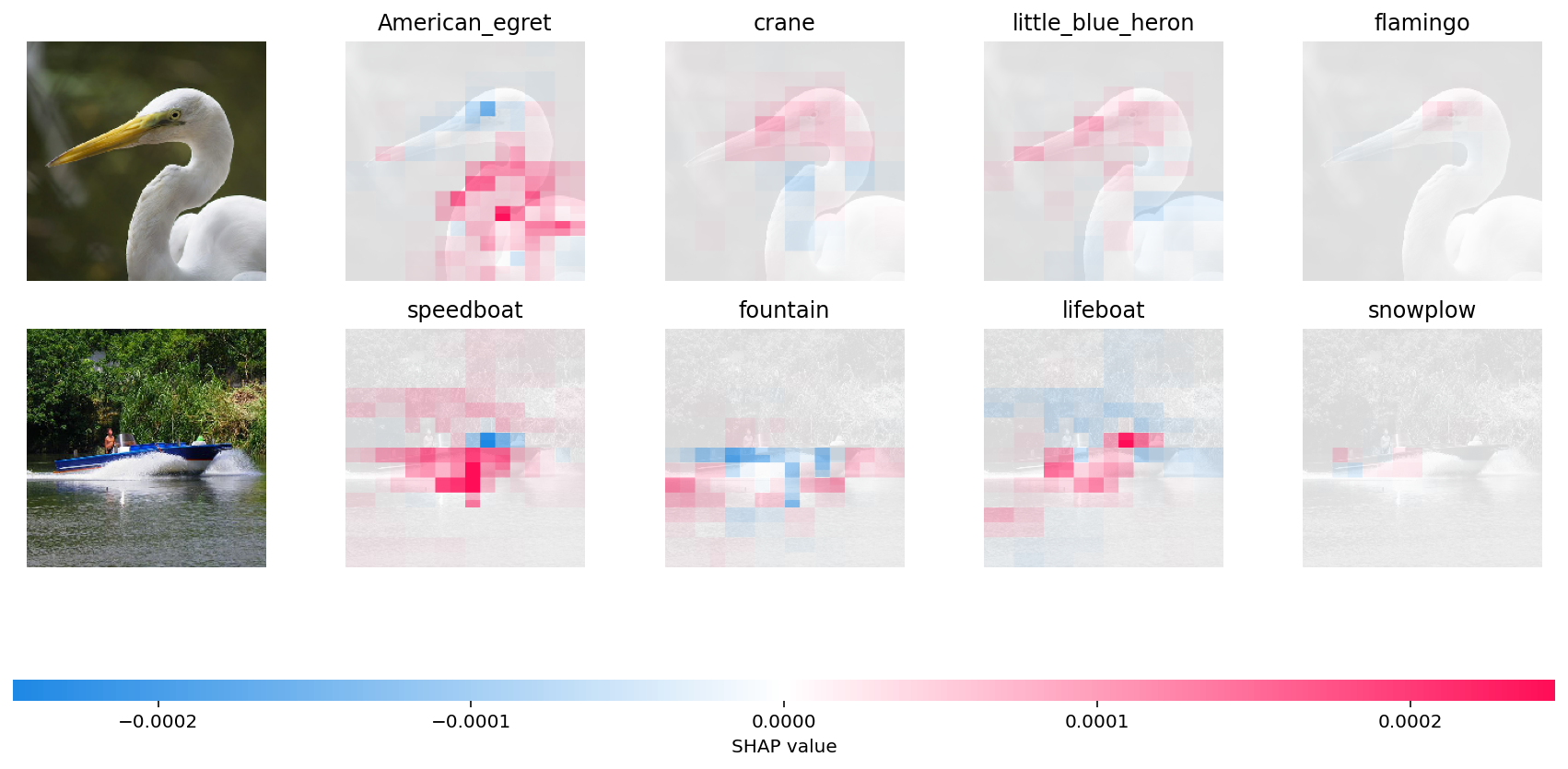
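The "next most probable classes" shown in the plots come from the `outputs=shap.Explanation.argsort.flip[:4]` argument passed to the explainer, which keeps the four highest-scoring classes for each image. The following is a rough NumPy illustration of that kind of selection, not SHAP's own implementation; the class list and scores are invented for the example and are not real ResNet50 outputs.

```python
import numpy as np

# Hypothetical scores for one image over five made-up classes
# (illustrative values, not real model outputs).
class_names = ["crane", "flamingo", "American_egret", "speedboat", "little_blue_heron"]
scores = np.array([0.20, 0.05, 0.60, 0.01, 0.14])

# argsort orders indices by ascending score; reversing gives descending order,
# and the slice keeps the indices of the k highest-scoring classes.
k = 4
top_k = np.argsort(scores)[::-1][:k]
top_names = [class_names[i] for i in top_k]

print(top_names)
```

Only the selected classes are explained, which keeps the plot readable when the model has 1000 outputs.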

A longer run with many evaluations

By increasing the max_evals parameter we let SHAP evaluate the original model more times and so obtain a more finely detailed explanation. We also use the blur kernel here, both to demonstrate it and because it is much faster than inpainting. Note that this run will take a while if you are not using a modern GPU.
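To give a feel for what masking with a blur means, here is a minimal NumPy sketch: the hidden region of the image is replaced by a blurred copy, while the visible region is left untouched. This is not SHAP's implementation. The helper names `box_blur` and `mask_with_blur` are hypothetical, the blur is a simple mean filter with an odd window size, and the image is random data.

```python
import numpy as np


def box_blur(image, k):
    """Mean filter with an odd k x k window, using edge padding (illustrative only)."""
    pad = k // 2
    padded = np.pad(image, ((pad, pad), (pad, pad), (0, 0)), mode="edge")
    out = np.zeros_like(image, dtype=float)
    height, width = image.shape[:2]
    # Sum the k*k shifted copies of the image, then divide to get the window mean.
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + height, dx:dx + width]
    return out / (k * k)


def mask_with_blur(image, keep, k=3):
    """Keep pixels where `keep` is True; replace the others with blurred values."""
    blurred = box_blur(image, k)
    return np.where(keep[..., None], image, blurred)


rng = np.random.default_rng(0)
image = rng.uniform(0, 255, size=(8, 8, 3))  # stand-in for a real image

keep = np.zeros((8, 8), dtype=bool)
keep[:, :4] = True  # left half stays visible, right half is hidden

masked = mask_with_blur(image, keep)
print(masked.shape)
```

A large window such as the `blur(128,128)` setting used in this notebook removes most local detail from the hidden regions, so the model sees only a coarse version of them.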

[6]:

# python function to get model output; replace this function with your own model function.

def f(x):

    tmp = x.copy()

    # preprocess_input overwrites the float array in place, so work on the copy

    preprocess_input(tmp)

    return model(tmp)

# define a masker that is used to mask out partitions of the input image.

masker_blur = shap.maskers.Image("blur(128,128)", X[0].shape)

# create an explainer with model and image masker

explainer_blur = shap.Explainer(f, masker_blur, output_names=class_names)

# here we explain two images using 5000 evaluations of the underlying model to estimate the SHAP values

shap_values_fine = explainer_blur(

    X[1:3], max_evals=5000, batch_size=50, outputs=shap.Explanation.argsort.flip[:4]

)

Partition explainer: 3it [00:17, 5.94s/it]

[7]:

# output with shap values

shap.image_plot(shap_values_fine)

Have an idea for more helpful examples? Pull requests that add to this documentation notebook are encouraged!